1. Introduction

In Uruguay, a large part of dairy production takes place on small farms where the family carries out most of the activities. These farmers lack sufficient investment capacity to install complex effluent handling systems, which also require time-consuming management (1)(2). In parallel, commercial fertilizers are applied to crops and pastures intended for animal feed. In this context, the productive use of effluents and the nutrients they supply can play a crucial role in ensuring the sustainability of the dairy production system while avoiding the environmental risks derived from inadequate management practices (1)(2).

The effluents are generated during the cleaning of facilities (milking parlor, waiting pens, and feeding pens). Because a large volume of water is used (between 30 and 50 L/cow/day)3, the effluents have low concentrations of organic matter (OM) and nutrients4. However, since many of the nutrients are in readily available forms, effluent irrigation can meet the nutritional requirements of crops and pastures through repeated applications (5)(6). It should also be noted that the effluents provide small amounts of secondary nutrients and micronutrients, which can contribute to the sustainability of the production system by replacing the nutrients exported in farm products7.

One of Uruguay's most common effluent management systems consists of storage in lagoons for later use in irrigation. After the facilities are washed, the effluents are generally channeled to a solids retention system and then led to the pools or lagoons. Treatment systems with several lagoons were used in the past, the first being very deep for the anaerobic treatment of effluents. This treatment aimed to reduce the organic load of the effluents, which were then discharged into watercourses. Direct discharge into watercourses is no longer allowed, which lessens the need for treatment. Due to the high cost of constructing and maintaining large pools or lagoons, storage capacity is often limited and, therefore, storage time is relatively short. It should be noted, however, that a wide range of situations can be found, from applying effluents immediately after generation to storing them in lagoons that are emptied after several months.

The characteristics of the storage system, especially the residence time, influence the biological processes and, therefore, the characteristics of the material that will be used for irrigation. While fresh effluents generally have higher OM contents, effluents stored for some time have undergone stabilization processes. During storage, the mineralization of the most labile fractions of organic substances occurs, releasing inorganic forms of the nutrients and decreasing carbon content (8)(9). Indeed, it has been reported that by treating effluents in anaerobic lagoons, the pathogen load and the viability of weed seeds can be reduced, although with variable results, depending on the management10.

Due to their origin, with a significant manure component, dairy effluents carry many microorganisms, some of them pathogens. This represents a dissemination risk when crops and pastures are irrigated with effluents, especially those intended for direct grazing by lactating cows11. It is generally recommended to observe a specific waiting time between applying effluents and grazing12. However, measurements of pathogen survival in the dairy production systems in Uruguay are scarce, and given the expected climate and soil influence, it is important to provide local assessments.

Bacterial resistance to antibiotics has also become a severe problem among pathogenic bacteria, which has led to an increased concern surrounding environmental risks and the potential spread of antibiotic resistance among microorganisms13. Resistance is typically common where antibiotics are heavily used. However, in Uruguay, the use of antibiotics as growth promoters for cattle is banned, and they are used in dairy farms mainly for mastitis cases14. Antibiotics are not completely metabolized in the body, and a significant fraction of the antibiotics administered to humans and animals are excreted unchanged (17-90%)15. Once an antibiotic is released into the environment, its behaviour and fate will be determined by its intrinsic properties, soil characteristics, and weather conditions.

Antibiotic resistance selection occurs among gastrointestinal bacteria, which are also excreted in manure and stored in lagoon systems. Although it is well known that dairy manure storage reduces pathogen numbers, it is unclear what effect storage treatments have on the persistence of antibiotic resistance genes (ARGs) in dairy effluents.

This work aimed to evaluate, in a greenhouse experiment, the effects of applying untreated dairy effluent and effluent stabilized in a two-lagoon system to tall fescue, examining the soil and plant biomass nutrient content and the potential sanitary risks through microbial measurements and the persistence of some ARGs.

2. Materials and methods

2.1 Experimental design

The greenhouse pot experiment was maintained for eight months. The soil was collected from the topsoil layer (0-15 cm depth) of a field in the Faculty of Agronomy (34°36'48" S, 56°12'54" W). The soil was a Mollisol with 5.0%, 70.9%, and 24.1% of sand, silt, and clay, respectively. The soil pH was 6.4, and the exchangeable cation content was K 2.0, Ca 14.0, Mg 3.1, and Na 0.3 cmolc kg-1.

Pots containing 4 kg of homogenized soil were sown with Festuca arundinacea cv. Tacuabé (four plants per pot), whose seeds were supplied by the National Seeds Institute (INASE). The experiment had a randomized block design with three replicates, according to Illarze4. The N fertilizer treatments included four applications to the soil at an equivalent rate of 50 kg N ha-1, i.e., 20 mg N kg-1 of pot soil, and consisted of (1) two-lagoon stabilized dairy effluent (LDE), (2) raw dairy effluent (RDE), (3) synthetic nitrogen fertilizer (urea), and (4) a non-amended soil (control). All applications were performed in solution, adjusted to the same final volume per pot, and the same amount of tap water (pH 6.9, electrical conductivity (EC) 2 mS cm-1) was added to the control. The first application was at seeding. Consecutive fertilization/harvesting cycles of 45 days were performed three times, simulating the typical management of tall fescue forage cuts. The final cut was done two months after the last application of treatments. The tall fescue forage was cut manually at 5 cm height.
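The equivalence between the field rate and the pot rate can be checked with a short calculation. The sketch below assumes a soil mass of 2.5 million kg per hectare in the arable layer; this value is not stated in the text, but it is the one under which 50 kg N ha-1 corresponds to 20 mg N kg-1 of soil. The function and variable names are illustrative.

```python
# Convert a field N rate (kg N ha-1) into a pot rate (mg N kg-1 soil).
# ASSUMPTION: 2.5e6 kg of soil per hectare; this value is not given in the text.
SOIL_MASS_KG_PER_HA = 2.5e6

def field_to_pot_rate(rate_kg_n_ha, soil_mass_kg_ha=SOIL_MASS_KG_PER_HA):
    """Return the N rate in mg N per kg of soil."""
    return rate_kg_n_ha * 1e6 / soil_mass_kg_ha  # 1 kg = 1e6 mg

pot_rate = field_to_pot_rate(50)   # mg N kg-1 soil per application
n_per_pot = pot_rate * 4           # mg N per application for a 4 kg pot
annual_field_rate = 50 * 4         # kg N ha-1 summed over the four applications

print(pot_rate, n_per_pot, annual_field_rate)
```

Under this assumption each pot receives 80 mg N per application, and the four applications add up to the equivalent of 200 kg N ha-1.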

Dairy effluents were collected from the dairy farm of Centro Regional Sur from the Faculty of Agronomy, with geographic coordinates 34°36'47.83" S and 56°12'54.00" W. The collection of effluents and their characterization is described in Illarze4. Although variable in composition throughout the year, dairy effluents had a total solid content lower than 2% and suspended solids less than 1%. The organic carbon (OC) content of RDE was higher than that of LDE except in the January samples4. The RDE applied in the experiment presented an average pH of 8.0, 1325 mg L-1 of total carbon, 371 mg L-1 of total N, and 98 mg L-1 of total ammoniacal N, and LDE had an average pH 8.4, 425 mg L-1 of total carbon, 194 mg L-1 of total N, and 63 mg L-1 of total ammoniacal N.
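As an illustration of how the effluent dose follows from its N concentration, the sketch below estimates the volume of each effluent that would supply 20 mg N kg-1 to a 4 kg pot. It assumes that dosing was based on total N and uses the average concentrations reported above; because composition varied between sampling dates, the actual volumes applied in each event would have differed. All names are illustrative.

```python
# Effluent volume needed to supply the target N dose to one pot.
POT_SOIL_KG = 4.0          # from the experimental design
N_RATE_MG_PER_KG = 20.0    # from the experimental design

# Average total N reported for the effluents (mg N L-1).
# ASSUMPTION: the dose was calculated on total N, not on ammoniacal N.
TOTAL_N_MG_PER_L = {"RDE": 371.0, "LDE": 194.0}

def effluent_volume_ml(total_n_mg_l, soil_kg=POT_SOIL_KG, rate=N_RATE_MG_PER_KG):
    """Return the effluent volume (mL) that supplies rate * soil_kg mg of N."""
    n_needed_mg = rate * soil_kg
    return n_needed_mg / total_n_mg_l * 1000.0

volumes = {name: effluent_volume_ml(conc) for name, conc in TOTAL_N_MG_PER_L.items()}
for name, volume in volumes.items():
    print(f"{name}: {volume:.0f} mL per pot")
```

Because LDE is roughly half as concentrated in N as RDE, about twice the volume is needed for the same dose, which is why all treatments had to be adjusted to a common final volume.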

The selected farm followed the usual production practices in the country and used beta-lactam antibiotic agents to treat bovine mastitis.

2.2 Chemical soil analysis

At the end of the experiment, two months after the fourth application of DE, soil samples were collected from each pot, and chemical properties were determined. The soil was dried at 45 °C until a constant weight was reached. The exchangeable bases (Ca, Mg, K, and Na) were extracted with ammonium acetate buffered at pH 7 (16). K and Na were determined by atomic emission spectrometry, and Ca and Mg by atomic absorption spectrometry. The available P content was determined by the Bray 1 method17.

2.3 Soil basal respiration

Soil samples were collected immediately after each DE application and at 7, 30, and 45 days thereafter, and were stored at 5 °C before analysis.

The soil basal respiration was measured by titration according to Öhlinger18 with slight modifications. Fifteen grams of soil were incubated in a closed vessel for 10 days at 25 °C in the dark. The CO2 was trapped in NaOH solution (0.25 M) and titrated against 0.1 M HCl after freshly prepared saturated BaCl2 (0.5 M) was added. The respiration rate was expressed as mg of CO2 g-1 dry soil d-1. Cumulative basal respiration over the 45 days following each application was calculated by multiplying the mean respiration rate (mg CO2 g-1 dry soil d-1) of consecutive sampling dates by the number of days between them and adding the resulting values.
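One possible reading of the cumulative calculation is a trapezoidal integration of the respiration rates over the sampling days. The sketch below follows that reading; the rates are hypothetical values chosen only to show the arithmetic, and the function name is illustrative.

```python
def cumulative_respiration(days, rates):
    """Integrate respiration rates (mg CO2 g-1 d-1) over the sampling days.

    For each pair of consecutive sampling dates, the mean of the two rates
    is multiplied by the number of days between them, and the products are
    summed (trapezoidal rule).
    """
    if len(days) != len(rates) or len(days) < 2:
        raise ValueError("need matching lists with at least two sampling dates")
    total = 0.0
    for i in range(1, len(days)):
        interval = days[i] - days[i - 1]
        mean_rate = (rates[i] + rates[i - 1]) / 2.0
        total += mean_rate * interval
    return total

# Sampling days used in the experiment; the rates are HYPOTHETICAL values.
sampling_days = [0, 7, 30, 45]
example_rates = [0.40, 0.30, 0.20, 0.20]
example_total = cumulative_respiration(sampling_days, example_rates)
print(f"{example_total:.2f} mg CO2 g-1 dry soil")
```

If the original calculation instead used the rate of each sampling date for the whole interval that follows, only the `mean_rate` line would change.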

2.4 Chemical analysis of tall fescue

The aboveground biomass obtained at the final herbage cut was dried at 65 °C for 48-72 h (until constant weight) and ground to pass through a 0.5-mm mesh. The total N content was then determined by the Kjeldahl method19. P, K, Ca, Mg, and Na were extracted with dilute HCl (20%) from samples ashed at 550 °C for 5 h. Total P was determined by the molybdenum blue method with ascorbic acid20. Ca and Mg were determined by atomic absorption spectrometry, and K and Na by atomic emission spectrometry21.

2.5 Quantification of pathogenic bacterial indicators

Total coliforms and E. coli numbers were determined in DE by a ten-fold dilution series of 10 mL of effluent in 90 mL of 0.01 M phosphate buffer, followed by agitation for 30 min in an orbital shaker at 200 rpm. The diluted samples were plated in triplicate on 3M™ E. coli/ Coliform Petrifilm™. After incubation at 37 °C for 24 h, characteristic blue colonies were counted as E. coli and red as total coliforms (CFU/100 mL).
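The conversion from plate counts to CFU/100 mL can be sketched as follows. The plated volume of 1 mL per film is an assumption (it is not stated in the text), the colony counts are hypothetical, and the function name is illustrative.

```python
from statistics import mean

def cfu_per_100_ml(colony_counts, dilution_exponent, plated_volume_ml=1.0):
    """Convert replicate plate counts into CFU per 100 mL of effluent.

    colony_counts: counts from replicate plates of the same dilution.
    dilution_exponent: n for a 10**-n dilution of the original sample.
    plated_volume_ml: volume on each plate (ASSUMED 1 mL, not stated in the text).
    """
    cfu_per_ml = mean(colony_counts) * 10 ** dilution_exponent / plated_volume_ml
    return cfu_per_ml * 100

# HYPOTHETICAL triplicate counts read on the 10^-4 dilution.
example_cfu = cfu_per_100_ml([18, 22, 20], dilution_exponent=4)
print(f"{example_cfu:.1e} CFU/100 mL")
```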

Total coliforms and E. coli numbers were determined in the soil immediately after DE application and 45 days after each application, and expressed as log10 CFU g-1 dry soil. For data analysis, the number of viable bacteria below the detection limit (10 CFU g-1 soil) was set at 1 log CFU g-1 dry soil. The E. coli and total coliforms survival results were calculated as C/Co values, where Co is the applied concentration in the irrigation solution and C is the concentration in the soil after 45 days of each application of dairy effluents.
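The handling of counts below the detection limit and the C/Co ratio can be written compactly. This is a minimal sketch: it assumes that C and Co have already been expressed on the same basis, and the example values are hypothetical.

```python
import math

DETECTION_LIMIT_CFU_PER_G = 10.0  # detection limit stated in the text

def log_cfu(cfu_per_g, detection_limit=DETECTION_LIMIT_CFU_PER_G):
    """Return log10 CFU g-1; counts below the limit are set to 1 log CFU g-1."""
    return math.log10(max(cfu_per_g, detection_limit))

def survival_ratio(c_after, c_applied):
    """Return C/Co. ASSUMPTION: both values are expressed on the same basis."""
    if c_applied <= 0:
        raise ValueError("applied concentration must be positive")
    return c_after / c_applied

# HYPOTHETICAL values: applied load and count recovered 45 days later.
example_ratio = survival_ratio(c_after=2.0e2, c_applied=5.0e4)
print(log_cfu(3.0), log_cfu(2.0e2), example_ratio)
```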

2.6 DNA extraction and quantification of ARGs

Total DNA was extracted from the dairy effluents and soil samples using the DNeasy PowerSoil Pro Kit (Qiagen, Carlsbad, CA, USA). For soil, 0.25 g samples were processed according to the manufacturer’s instructions. For dairy effluent samples, 50 mL were centrifuged in 50 mL Falcon tubes at 10,000 × g for 10 min at room temperature to obtain pellets before bead beating.

The DNA concentration and purity were determined with NanoDrop® 2000c UV-vis spectrophotometry (Thermo Scientific, USA). The extracted DNA samples were stored at −20 °C before PCR amplification.

Three beta-lactam resistance genes (blaTEM, blaOXA, and blaSHV) were chosen to assess the presence of the genes in the samples and their quantification.

Primers for each gene were those published in Dallenne and others22. For blaTEM: forward primer 5’-CAT TTC CGT GTC GCC CTT ATT C-3’ and reverse primer 5’-CGT TCA TCC ATA GTT GCC TGA C-3’; for blaOXA: forward primer 5’-GGC ACC AGA TTC AAC TTT CAA G-3’ and reverse primer 5’-GAC CCC AAG TTT CCT GTA AGT G-3’; for blaSHV: forward primer 5’-AGC CGC TTG AGC AAA TTA AAC-3’ and reverse primer 5’-ATC CCG CAG ATA AAT CAC CAC-3’.

Purified PCR products for each of the genes analyzed were used as templates for the calibration curves. The calibration curves consisted of five ten-fold dilutions of the template; each dilution point was amplified in triplicate. The DNA concentration of the templates was obtained by spectrofluorometry (Qubit™, Thermo Fisher).
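A calibration curve of this kind is commonly used as sketched below: the template concentration is converted into copy numbers, Ct is regressed on log10 copies, and unknown samples are interpolated from the fitted line. This is a generic illustration rather than the authors' exact procedure; the average mass of 660 g mol-1 per base pair is the usual approximation for double-stranded DNA, and the amplicon length and Ct values are hypothetical.

```python
AVOGADRO = 6.022e23          # molecules per mole
BP_MASS_G_PER_MOL = 660.0    # average mass of one double-stranded base pair

def copies_per_ul(ng_per_ul, amplicon_bp):
    """Convert a dsDNA concentration into template copies per microlitre."""
    return ng_per_ul * 1e-9 * AVOGADRO / (amplicon_bp * BP_MASS_G_PER_MOL)

def fit_standard_curve(log10_copies, ct_values):
    """Least-squares fit of Ct = slope * log10(copies) + intercept."""
    n = len(log10_copies)
    mean_x = sum(log10_copies) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_copies)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_copies, ct_values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def efficiency(slope):
    """Amplification efficiency; 1.0 corresponds to doubling in every cycle."""
    return 10 ** (-1.0 / slope) - 1.0

def copies_from_ct(ct, slope, intercept):
    """Interpolate the copy number of an unknown sample from its Ct."""
    return 10 ** ((ct - intercept) / slope)

# HYPOTHETICAL five-point, ten-fold dilution series (log10 copies vs mean Ct).
std_log_copies = [3.0, 4.0, 5.0, 6.0, 7.0]
std_ct = [28.04, 24.72, 21.40, 18.08, 14.76]
slope, intercept = fit_standard_curve(std_log_copies, std_ct)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, E={efficiency(slope):.2f}")
```

Copies per reaction obtained this way still have to be scaled by the elution volume and the amount of sample extracted to reach copies per mL of effluent or per g of soil.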

The qPCRs for each gene were run separately and not in a multiplex fashion. The Maxima SYBR Green qPCR Master Mix (Thermo Fisher) was used. The mix for each qPCR assay contained 2 μL of the extracted DNA and 10 mM of each primer. Runs were performed on a Rotor-Gene Q instrument (QIAGEN). The cycling conditions were an initial denaturing step of 10 minutes at 95 °C, followed by 40 cycles of 15 seconds at 95 °C, 20 seconds at 60 °C, and 20 seconds at 72 °C; the fluorescence was measured at the end of each cycle. A melting curve was performed at the end of the run, with fluorescence measured from 72 °C to 95 °C in increments of 1 °C.

2.7 Statistical analysis

One-way analysis of variance (ANOVA) followed by Fisher’s least significant difference (LSD) test was used to examine the effects of treatments on the soil chemical composition, plant yield and nutrition, bacterial indicators, and the abundance of ARGs. Each parameter was tested for normality of distribution and homogeneity of variance using the Shapiro-Wilk test and Levene’s test, respectively. When the data, as in the case of pH, remained non-normal after transformation, they were analyzed using the non-parametric Kruskal-Wallis test followed by a Wilcoxon rank-sum test to determine significant differences between treatments.
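For readers unfamiliar with the procedure, the sketch below shows the arithmetic behind a one-way ANOVA F statistic and Fisher's LSD for equal group sizes. It is not a substitute for the statistical software used in the study: the data are hypothetical, blocks are ignored, and the critical t value (2.306 for 8 error degrees of freedom at a two-sided 0.05 level) is taken from standard tables.

```python
import math

def one_way_anova(groups):
    """Return (F, MSE, error df) for a one-way ANOVA on a list of groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    ss_between = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - sum(g) / len(g)) ** 2 for g in groups for x in g)
    df_error = n_total - k
    mse = ss_within / df_error
    f_stat = (ss_between / (k - 1)) / mse
    return f_stat, mse, df_error

def fisher_lsd(mse, n_per_group, t_crit):
    """Least significant difference between two means (equal group sizes)."""
    return t_crit * math.sqrt(2.0 * mse / n_per_group)

# HYPOTHETICAL data: four treatments with three replicates each.
example_groups = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [3.0, 4.0, 5.0], [4.0, 5.0, 6.0]]
f_stat, mse, df_error = one_way_anova(example_groups)
lsd = fisher_lsd(mse, n_per_group=3, t_crit=2.306)  # tabulated t for df = 8
print(f"F={f_stat:.2f}, MSE={mse:.2f}, df={df_error}, LSD={lsd:.2f}")
```

Two treatment means are declared different when their absolute difference exceeds the LSD, which is how the letters in the tables are assigned.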

Data were expressed as means ± standard error. All statistical analyses were performed using Infostat23, and the significance level was considered at p<0.05.

3. Results and discussion

3.1 Effect of DE applications on soil chemical properties and plant biomass and nutrition

In general, the soil application of organic amendments like DE increases soil fertility, especially N, P, and K (24)(25)(26)(27)(28). In this pot trial, with an N fertilization dose equivalent to 200 kg N ha-1 per year, the content of exchangeable cations such as Na, K, and Mg increased in the soil after LDE applications relative to the control (Table 1). Additionally, Na and NO3−-N content increased in soil with RDE applications relative to the control soil (Table 1).

Table 1: Soil properties of fescue pots with four repeated applications of raw (RDE) or lagoon dairy effluent (LDE), urea or non-amended soil at the end of the experiment (mean n=3 ± S.E). Different letters within a column indicate significant differences between treatments (p<0.05)

| Treatment | pH | OC (%) | NO3−-N (mg kg-1) | NH4+-N (mg kg-1) | P-Bray1 (mg kg-1) | Ca++ (cmolc kg-1) | Mg++ (cmolc kg-1) | K+ (cmolc kg-1) | Na+ (cmolc kg-1) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Control | 7.4 ± 0.4 b | 3.0 ± 0.1 | 5.7 ± 0.1 a | 11.8 ± 4.0 | 78.3 ± 4.1 | 14.2 ± 0.3 | 3.6 ± 0.1 a | 1.5 ± 0.0 a | 1.0 ± 0.0 a |
| Urea | 7.0 ± 0.0 a | 3.1 ± 0.1 | 7.5 ± 0.2 ab | 15.5 ± 1.6 | 80.0 ± 3.5 | 14.4 ± 0.3 | 3.6 ± 0.0 ab | 1.4 ± 0.0 a | 1.1 ± 0.1 a |
| RDE | 7.1 ± 0.2 ab | 3.1 ± 0.1 | 8.7 ± 1.1 b | 17.6 ± 1.4 | 77.5 ± 2.2 | 14.2 ± 0.2 | 3.6 ± 0.1 ab | 1.5 ± 0.1 a | 1.5 ± 0.0 b |
| LDE | 7.1 ± 0.2 ab | 3.0 ± 0.1 | 6.2 ± 0.3 a | 15.4 ± 1.0 | 76.7 ± 9.8 | 13.8 ± 0.2 | 3.8 ± 0.0 b | 1.8 ± 0.1 b | 1.5 ± 0.1 b |

High concentrations of K present a challenge in achieving an agronomic balance of nutrients when applying effluent to land29, potentially causing an imbalance of Mg and Ca in animals. A study on the land application of two types of DE demonstrated that both plant biomass and soil K levels increased under effluent treatments, as the amount of K applied in DE exceeded tall fescue maintenance requirements at rates above 100 kg N/ha/year30. The increase of K levels in soils after the addition of LDE may therefore pose a problem for land application; however, in the current case, the nutrient content in the plant biomass with applications of DE did not exceed that of the control or the urea treatments (Table 2). The grass stagger index (GSI = K+/(Ca2+ + Mg2+)), used as an index of the nutritive quality of forage, was very low and never exceeded 2.2, the value indicated as the threshold for risk of hypomagnesemia31.
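The GSI can be approximated from the mean forage contents in Table 2. The sketch below assumes that the ratio is computed on a charge-equivalent basis (meq kg-1), which is the usual convention for this index but is not spelled out in the text; because it uses treatment means rather than individual pots, the values are only indicative.

```python
# Molar mass (g mol-1) and charge of each cation.
CATIONS = {"K": (39.098, 1), "Ca": (40.078, 2), "Mg": (24.305, 2)}

def meq_per_kg(g_per_kg, element):
    """Convert a content in g kg-1 into milliequivalents per kg."""
    molar_mass, charge = CATIONS[element]
    return g_per_kg / molar_mass * charge * 1000.0

def grass_stagger_index(k, ca, mg):
    """GSI = K / (Ca + Mg); inputs in g kg-1 DM, ratio taken in meq kg-1.

    ASSUMPTION: equivalent basis, not stated explicitly in the text.
    """
    return meq_per_kg(k, "K") / (meq_per_kg(ca, "Ca") + meq_per_kg(mg, "Mg"))

# Mean forage contents (K, Ca, Mg in g kg-1) taken from Table 2.
table2_means = {
    "Control": (15.4, 4.0, 3.8),
    "Urea": (17.6, 3.4, 3.4),
    "RDE": (20.3, 3.7, 3.7),
    "LDE": (17.1, 3.9, 3.8),
}
gsi = {treatment: grass_stagger_index(*values) for treatment, values in table2_means.items()}
for treatment, value in gsi.items():
    print(f"{treatment}: GSI = {value:.2f}")
```

With these means the index stays between roughly 0.8 and 1.1 for all treatments, well below the 2.2 threshold.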

Long-term application of effluents may lead to the accumulation of exchangeable Na in the soil. Although monovalent cations are generally less strongly held on cation exchange sites than divalent ones and do not pose a risk to the receiving soil, a decrease in exchangeable Ca and Mg has been reported31. This effect cannot be detected in this pot trial but must be considered in the field.

While this study focused on N, dairy manure also contained high levels of P, although the final soil P content was not modified by DE addition (Table 1). In this case, the high nutrient status of the soil used for this pot trial could have masked the effect of DE nutrient input on the soil.

Dairy effluents typically exhibit alkalinity, and numerous works indicate that effluent irrigation increases soil pH in acid soils32. In this study, the soil pH was close to neutrality, and the application of DE did not affect soil pH relative to the control soil (Table 1). Conversely, urea fertilization led to a decrease in soil pH. This acidification effect due to excessive use of synthetic N fertilizer has previously been reported33.

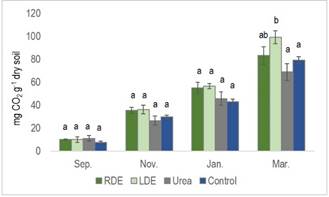

The percentage of OC input from RDE generally surpassed that from LDE4, and the application of DE contributes to a long-term increase in the soil OC content32. Even though our results did not reveal an increase in soil OC content (Table 1), the cumulative basal respiration at the last application of LDE was significantly higher. There was a similar tendency for RDE in the preceding two applications (Figure 1).

Figure 1: Cumulative basal respiration of soil with the application of raw dairy effluent (RDE) or lagoon dairy effluent (LDE), urea, and non-amended soil at the four application events. Columns represent means ± standard errors (n=3). Columns with different letters indicate a significant difference between treatments on each sampling date (P<0.05)

The cumulative basal respiration of the soil, considering all four applications in the control and urea treatment, averaged less than 40 mg CO2 g-1 dry soil, whereas in the LDE and RDE treatments, it ranged from 46 to 50 mg CO2 g-1 dry soil. This finding aligns with Liu and others34, who reported that soil basal respiration with organic amendments like farmyard manure and green manure was higher than with NPK treatment.

Soil respiration, widely employed to quantify microbial activity in soil (35)(36), generally exhibits a positive correlation with soil OM content36. Accordingly, the application of these two DE in the same pot experiment enhanced microbial activity4.

The application of organic materials with a low dry matter content, as in our case, generally results in few measurable changes in soil properties in the short term37 and may induce a priming effect, leading to the mineralization of native soil OM and the potential decrease of soil OC38. Therefore, the increase in soil basal respiration obtained in this study may be attributed to the priming effect induced by the OC input from DE.

The tall fescue yield at the fourth cut of the experiment was higher for RDE than for LDE application (Table 2). However, neither the N content of the plant biomass nor the N uptake after repeated applications of DE differed from urea fertilization or the control (Table 2). In this same pot trial, the authors of the present study verified that the repeated applications of these DE did not result in detectable changes in N uptake or yield (sum of four herbage cuts)4. Furthermore, this study evaluated the chemical composition of other crucial macronutrients in plant biomass that showed similar levels between treatments. These findings once again highlight the basal nutrient status of the soil as the factor responsible for masking the effect of DE or urea fertilization on plant nutrient content and yield.

Table 2: Yield, N uptake and nutrient content of F. arundinacea grown in pots with application of raw (RDE) or lagoon dairy effluent (LDE), urea or non-amended soil (mean n=3 ± S.E), at the last forage cut. Different letters within a column indicate significant differences between treatments (p<0.05)

| Treatment | Pasture yield (g DM pot-1) | N uptake (g pot-1) | N (g kg-1) | P (g kg-1) | Ca++ (g kg-1) | Mg++ (g kg-1) | K+ (g kg-1) | Na+ (g kg-1) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Control | 17.3 ± 9.8 ab | 0.35 ± 0.03 | 20.1 ± 1.9 | 3.6 ± 0.3 | 4.0 ± 0.0 | 3.8 ± 0.2 | 15.4 ± 1.1 | 1.9 ± 0.3 |
| Urea | 20.1 ± 2.2 ab | 0.44 ± 0.07 | 21.9 ± 1.2 | 2.4 ± 0.4 | 3.4 ± 0.3 | 3.4 ± 0.2 | 17.6 ± 0.4 | 1.1 ± 0.1 |
| RDE | 20.8 ± 2.7 b | 0.41 ± 0.01 | 19.7 ± 0.4 | 3.0 ± 0.2 | 3.7 ± 0.1 | 3.7 ± 0.2 | 20.3 ± 2.5 | 1.5 ± 0.4 |
| LDE | 16.8 ± 5.2 a | 0.32 ± 0.03 | 19.1 ± 1.6 | 3.3 ± 0.2 | 3.9 ± 0.2 | 3.8 ± 0.1 | 17.1 ± 0.9 | 1.9 ± 0.2 |

3.2 Bacterial indicators of soils with DE applications

By determining the number of pathogenic bacterial indicators in DE and assessing the spread and survival in the soil, we aimed to estimate the health risks associated with the land disposal of DE.

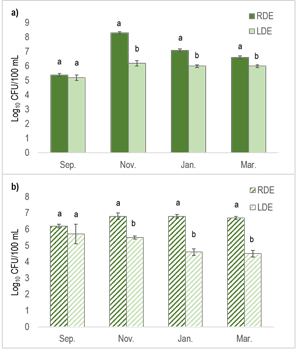

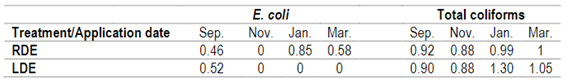

Previous research has demonstrated that the number of fecal bacteria in manure slurries can be reduced through long-term storage39. Consistent with this, our current study revealed that the total coliforms and E. coli numbers were higher for RDE than for LDE, except for the September sampling date (Figure 2). The difference in total coliform number between both effluents reached its maximum in November (2.0E+08 vs 1.7E+06), and for E. coli in January and March (on average 3.5E+07 vs 2.0E+05). These results indicate a substantial removal of enteric bacterial pathogens through lagoon storage.

Figure 2: Numbers of total coliforms (a) and E. coli (b) in raw dairy effluent (RDE) or lagoon dairy effluent (LDE) at the four sampling dates. Columns represent means ± standard errors (n=3). Columns with different letters indicate a significant difference between dairy effluent types at each sampling date (p<0.05)
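The differences between effluents quoted in the text can be expressed as log10 reductions and percentage removals, which are easier to compare with other treatment systems. The sketch below uses only the counts given above; the function names are illustrative.

```python
import math

def log_reduction(raw_count, stored_count):
    """Return the log10 reduction between raw and lagoon-stored effluent."""
    return math.log10(raw_count / stored_count)

def percent_removal(raw_count, stored_count):
    """Return the percentage of the raw count that is no longer recovered."""
    return (1.0 - stored_count / raw_count) * 100.0

# Counts quoted in the text (RDE vs LDE).
coliform_lr = log_reduction(2.0e8, 1.7e6)   # total coliforms, November
ecoli_lr = log_reduction(3.5e7, 2.0e5)      # E. coli, January and March average

print(f"total coliforms: {coliform_lr:.2f} log10, {percent_removal(2.0e8, 1.7e6):.2f} %")
print(f"E. coli: {ecoli_lr:.2f} log10, {percent_removal(3.5e7, 2.0e5):.2f} %")
```

Both indicators show a reduction of slightly more than two orders of magnitude at the dates with the largest differences.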

The seasonal variability in the chemical composition of both DE has been reported in our previous work4. For the September sampling (end of the cool season), RDE did not differ from LDE in dry matter content and recorded lower total C than in the other sampling dates. This may account for the lack of differences in the number of pathogenic bacterial indicators at that date.

Upon the application of DE to the soil, significantly higher E. coli numbers were recorded in RDE-treated soil than in the control and urea treatments (Figure 3).

Figure 3: Average number of total coliforms (a) and E. coli (b) in soil with the application of raw dairy effluent (RDE) or lagoon dairy effluent (LDE), urea or control, immediately after and 45 days from applications. Columns represent means ± standard errors (n=3). Columns with different letters indicate a significant difference between treatments for each day (P<0.05)

Forty-five days after RDE application, the E. coli number had decreased by nearly two orders of magnitude (from 2.5E+04 to 2.3E+02), yet it remained significantly higher than in the other treatments (Figure 3). On the other hand, the count of total coliforms did not rise at the time of DE applications and remained nearly constant 45 days after the applications. Nevertheless, soil treated with RDE had a significantly higher number of total coliforms than the control 45 days after DE applications (Figure 3).

Table 3 shows the survival of E. coli introduced with the effluents in the soil 45 days after each application. E. coli was undetectable after repeated LDE applications but was counted in the pots treated with RDE. No differences were found in the survival of total coliforms between the two DE applications (Table 3). Several studies have indicated that coliforms can survive and proliferate in extraintestinal environments, and their role as indicators has been questioned40.

Table 3: Survival of E. coli and total coliforms in fescue pot soil with the application of raw (RDE) or lagoon dairy effluent (LDE) for each of the four applications. Ratio of number of pathogenic bacteria indicators after 45 days of application to number applied with effluents

Results from a field lysimeter study showed that the land application of the treated effluent can lead to significant reductions in E. coli leaching compared with the untreated original DE41.

It has been established that dairy effluent application to soil generally promotes the numbers and survival of enteropathogenic bacteria (30)(42). Our results suggest a low-level persistence of enteric bacteria following RDE application to the soil, indicating that more than 45 days are required for the complete elimination of indicator bacteria.

In Uruguay, a waiting time of 21-30 days after DE application is recommended before grazing, and grazing by animals less than 1 year old and by pre-calving cows is advised against43. The present results confirm the pertinence of this recommendation.

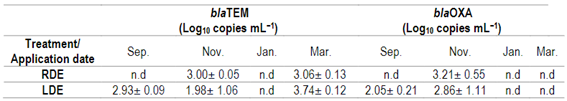

3.3 Occurrence of ARGs in DE and amended soil

The fate of ARGs spread after DE applications to soil was assessed by targeting three beta-lactam ARGs. Resistance to beta-lactam antibiotics is an increasing concern, and beta-lactamase production is the most common mechanism for this drug resistance22.

A growing number of cases of multidrug-resistant bacteria producing beta-lactamases have been reported in Uruguayan dairy farms (14)(44)(45). Numerous studies have shown that DE serves as a source of ARGs and may play a key role in their dissemination to the soil (46)(47)(48).

The presence of blaTEM and blaOXA was detected in at least one sampling date in RDE or LDE (Table 4), while blaSHV was not detected. However, neither of the detected beta-lactam ARGs could later be observed in the DE-amended soils at the end of the experiment (data not shown). These results indicate that DE applications did not lead to the dissemination of these ARGs in the soil. The decline over time following applications suggests that microbes possessing beta-lactam ARGs had somewhat limited survival potential in the soil environment46, probably due to their lack of adaptation to the soil characteristics and to inhibition by indigenous soil bacteria (49)(50).

Table 4: Abundance of blaTEM and blaOXA genes in raw (RDE) or lagoon dairy effluent (LDE) at the four sampling dates (mean n=3 ± S.E)

n.d = not detected

In this study, the blaTEM gene was present in November and March in RDE samples, and in LDE it was also detected in September (Table 4). On average, the blaTEM abundance was 3.5E+03 and 8.8E+02 copies mL−1 for LDE and RDE, respectively, with no significant differences. The blaOXA gene was only present in November in RDE samples, and its abundance was not significantly different from that in LDE. On average, the blaOXA abundance was 1.6E+03 and 2.1E+03 copies mL−1 for LDE and RDE, respectively.

Neither of the beta-lactam ARGs was detected in the DE in January, the warmest sampling date (Table 4). In agreement with these findings, Schages and others51 reported a seasonal effect on ARG abundance in wastewater, with ARG abundance negatively correlated with temperature. Furthermore, the authors found that antibiotic-resistant bacteria were also more prevalent in the colder seasons.

The occurrence of both beta-lactam ARGs was more frequent in LDE than in RDE. This was expected, as the RDE sampling represents a one-day flush of the dairy barn floor, while the LDE sampling represents the annual disposal of dairy effluents, where excreted antibiotics can continue exerting selection pressure for antibiotic resistance genes52. Thus, lagoon storage did not remove ARGs in this case, although qPCR does not provide information on the expression of these genes53.

Further studies are needed to determine the presence of other ARGs that confer resistance to other antibiotics used in the country to treat mastitis. However, our initial results are promising regarding the application of DE to pastures, since the beta-lactam ARGs could not be detected in the amended soil.

4. Conclusions

No difference in tall fescue nutrient content was observed after DE application in this short-term pot trial performed with a nutrient-rich soil. In addition, DE application to the soil did not result in significant changes in soil nutrient content or pH. However, exchangeable cations increased after lagoon DE applications, while soil Na content was higher with both types of effluent applications.

The risk of dissemination and survival of pathogenic bacterial indicators in soil was higher with the application of RDE than with LDE, which indicates that lagoon storage helps to reduce this risk.

Despite DE being a hotspot for the development of antimicrobial resistance, the fact that the ARGs evaluated were not detected in the soil underlines the importance of applying DE to pastures rather than discharging it to water.